Kubernetes, Local to Production with Django: 3 - Postgres with Migrations on Minikube

So far, we have created and deployed a basic Django app to the minikube Kubernetes cluster. However, to experience the true power of Django, it needs to be connected to a persistent datastore such as a PostgreSQL database.

The code for this part of the series can be found on GitHub in the part_3-postgres branch.

Edits

- July 2019: added storageClassName: manual to the PersistentVolume and PersistentVolumeClaim spec files.

- November 2020 (major updates): updated to python 3.8, Django 3.1.2 and postgres 13, and added a run script for migrations as opposed to jobs.

Objectives

The objectives for this tutorial are:

- To utilize the Secret resource API to handle credentials used by the PostgreSQL and Django pods.

- To utilize the Persistent Volume subsystem for PostgreSQL data storage.

- Initialize PostgreSQL pods to use the persistent volume object.

- Expose the database as a service to allow for access within the cluster.

- Update the Django application in order to access the PostgreSQL database.

- Run migrations using the Job API and alternatively as a shell command using a deployed pod.

Requirements

Some basic knowledge of Kubernetes is assumed. If you are new to it, refer to the previous tutorial post for an introduction to minikube.

Minikube needs to be up and running, which can be done by:

$ minikube start

The minikube docker daemon needs to be used instead of the host docker daemon. This can be done by running:

$ eval $(minikube docker-env)

To view the resources that exist on the local cluster, the minikube dashboard will be utilized using the command:

$ minikube dashboard

This opens a new tab on the browser and displays the objects that are on the cluster.

From the GitHub repo, the Kubernetes manifest files can be found in:

$ kubernetes_django/deploy/..

The rest of the tutorial will assume the above is the current working directory when applying the Kubernetes manifests.

TL;DR

To get the tutorial code up and running, execute the following sequence of commands:

# Setup project

$ git clone git@github.com:gitumarkk/kubernetes_django.git

$ cd kubernetes_django/deploy/kubernetes/

$ git checkout part_3-postgres

# Configure minikube

$ minikube start

$ eval $(minikube docker-env)

$ minikube dashboard # Open dashboard in new browser

# Apply Manifests

$ kubectl apply -f postgres/

$ kubectl apply -f django/

# Show service in browser

$ minikube service django-service # Wait if not ready

# Delete cluster when done

$ minikube delete

1. Setting up PostgreSQL

1.1 What is PostgreSQL?

PostgreSQL is a popular open source Object Relational Database Management System with a strong reputation for reliability, data integrity and correctness. It’s the database of choice for many Django projects as it’s easy to integrate.

Even though we are going to install a database within a Kubernetes cluster, my personal preference is to use a database as a service offering such as AWS RDS. Such services provide features that can be hard to implement and manage well, which include:

- Managed high availability and redundancy.

- Ease of scaling up or down (i.e. vertical scaling) by increasing or decreasing the computational resource for the database instance to handle variable load.

- Ease of scaling in and out (i.e. horizontal scaling) by creating replicated instances to handle high read traffic.

- Automated snapshots and backups with the ability to easily roll back a database.

- Performing security patches, database monitoring etc.

However, there exist certain scenarios where a cloud native database solution is not feasible, and thus Kubernetes is used to manage the database instances, e.g. when running locally or on premise.

1.1 Persistent Volumes

Data in PostgreSQL needs to be persisted for long term storage. The default location for storage in PostgreSQL is /var/lib/postgresql/data. However, Kubernetes pods are designed to have ephemeral storage, which means once a pod dies all the data within the pod is lost. To mitigate against this, Kubernetes has the concept of volumes. There are several volume options, but the recommended type for databases is the PersistentVolume subsystem.

Kubernetes focuses on loose coupling: it aims to decouple how a volume is provided on the platform from how it’s consumed by pods. This is because the volume provision definition is usually dependent on the platform the cluster is running on, e.g. the volume definition for cloud native solutions might be different from on premise solutions. As a result, Kubernetes provides two API resources, which are the PersistentVolume and the PersistentVolumeClaim.

PersistentVolume

A PersistentVolume (PV) is a piece of storage in the cluster. It is a cluster resource in the same way that a node is a cluster resource. Its lifecycle is independent of any pod that uses it, and it captures the details of how storage is implemented e.g. NFS, iSCSI, or a cloud-provider-specific storage system.

In layman’s terms, the PV says, “I am going to create 100 GB of storage on the cluster based on a platform specific storage class e.g. AWS Elastic Block Store. I have no idea who is going to use it, but whoever wants it can get it”. The manifest file that creates the PV is postgres/volume.yaml.

Taking a logical walk through the manifest file:

- The spec: capacity: storage field states how much capacity to create.

- The spec: accessModes field defines how the pod will utilize the volume.

- The spec: hostPath field states that the volume should be in the local file system. This is ideal for minikube but is not ideal for a production application. (On macOS it’s tempting to set the hostPath as a /Users/../<data> path. However, from experience, setting a root path other than /data/ prevents the Postgres pod from initializing due to a permissions issue. I am not sure if it’s a bug or a constraint.)

To create the volume, run the following:

$ kubectl apply -f postgres/volume.yaml

To get the volume details, the command to execute is:

$ kubectl get pv

NAME          CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                  STORAGECLASS   REASON   AGE

postgres-pv   2Gi        RWO            Retain           Bound    default/postgres-pvc   standard                3m

This result can also be ascertained by viewing the minikube dashboard.

PersistentVolumeClaim

A PersistentVolumeClaim (PVC) is a request for storage by the user and allows the user to consume abstract storage resources on the cluster. Claims can request specific sizes and access modes. In layman’s terms, the PVC says, “I want 2 GB of storage somewhere on the network. I don’t know where it is or how it’s made, but I assume it exists and I want to lay a claim to it”. In this way the volume definition and volume consumption remain separate. The manifest that creates the PVC is postgres/volume_claim.yaml.

To create the volume claim, run the following:

$ kubectl apply -f postgres/volume_claim.yaml

To see our created persistent volume claim, we run:

$ kubectl get pvc

NAME           STATUS   VOLUME        CAPACITY   ACCESS MODES   STORAGECLASS   AGE

postgres-pvc   Bound    postgres-pv   2Gi        RWO            standard       3m
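To make the split between providing and consuming storage more concrete, the sketch below models a volume and a claim as plain Python dictionaries and checks, in a deliberately simplified way, whether the claim could bind to the volume. It is not the repository's manifest. The field names follow the Kubernetes manifest schema, and the names, sizes and storage class are taken from the kubectl output above (the July 2019 edit note mentions manual as the storage class instead). The hostPath value and the parse_quantity and can_bind helpers are hypothetical, and the real binding logic in Kubernetes covers more cases than this.

```python
import json

# Binary-suffix multipliers; only the suffixes needed for this illustration.
_UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def parse_quantity(quantity: str) -> int:
    """Convert a quantity such as '2Gi' to bytes (binary suffixes only)."""
    suffix = quantity[-2:]
    if suffix not in _UNITS:
        raise ValueError(f"unsupported quantity: {quantity!r}")
    return int(quantity[:-2]) * _UNITS[suffix]


# What the platform provides.
persistent_volume = {
    "apiVersion": "v1",
    "kind": "PersistentVolume",
    "metadata": {"name": "postgres-pv"},
    "spec": {
        "storageClassName": "standard",
        "capacity": {"storage": "2Gi"},
        "accessModes": ["ReadWriteOnce"],  # shown as RWO by kubectl
        "hostPath": {"path": "/data/postgres-pv"},  # assumed path, not from the repo
    },
}

# What the application asks for.
persistent_volume_claim = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "postgres-pvc"},
    "spec": {
        "storageClassName": "standard",
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "2Gi"}},
    },
}


def can_bind(pv: dict, pvc: dict) -> bool:
    """Simplified check: same class, compatible modes, enough capacity."""
    pv_spec, pvc_spec = pv["spec"], pvc["spec"]
    same_class = pv_spec.get("storageClassName") == pvc_spec.get("storageClassName")
    modes_ok = set(pvc_spec["accessModes"]) <= set(pv_spec["accessModes"])
    requested = parse_quantity(pvc_spec["resources"]["requests"]["storage"])
    available = parse_quantity(pv_spec["capacity"]["storage"])
    return same_class and modes_ok and available >= requested


if __name__ == "__main__":
    print(can_bind(persistent_volume, persistent_volume_claim))
    # kubectl also accepts JSON, so a dictionary like this can be dumped to a file.
    print(json.dumps(persistent_volume_claim, indent=2))
```

The claim never mentions hostPath or any other storage backend, which is the point of the separation: only the volume definition has to change when moving from minikube to another platform.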

1.2 Postgres Credentials

Credentials are required both to create the Postgres database and to access it from our Django application. Furthermore, we don’t want the credentials to be stored in version control or directly on the image. To this end, Kubernetes provides the Secret object to store sensitive data. A Secret object can be created using the declarative file specification or from the command line. For this section, we will use the declarative file specification.

The points of interest are the data: user field and the data: password field. These contain base64 encoded strings, where the encoding can be generated from the command line by running:

$ echo -n "<string>" | base64

It’s worth noting that even though base64 is an encoded format, it’s not encrypted, and care should be taken when managing the secrets file. The Secret object is then added to the Kubernetes cluster using:

$ kubectl apply -f postgres/secrets.yaml

The result can be ascertained by viewing the minikube dashboard.
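The point that base64 is an encoding and not encryption can be checked with a few lines of Python. This is a minimal sketch using only the standard library. The helper names and the example credential are hypothetical and are not taken from the project's secrets file.

```python
import base64


def encode_secret_value(value: str) -> str:
    """Return the base64 text expected in a Secret's data field."""
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded: str) -> str:
    """Reverse the encoding; note that no key or password is needed."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    encoded = encode_secret_value("example-user")  # hypothetical credential
    print(encoded)
    print(decode_secret_value(encoded))
```

Anyone who can read the secrets file can recover the original values this way, which is why the file should be kept out of version control.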

1.3 Postgres Deployment

The Postgres manifest for deployment is similar to the Django manifest, with the exception that we use the postgres:9.6.6 container image (the Edits section notes a later move to postgres 13), which will be fetched from the hub.docker.com registry. The database credentials stored in the Secret object are also passed in, and the volume claim is mounted into the pod. The PersistentVolumeClaim is mounted to /var/lib/postgresql/data, which is the default location used to store the database. However, the data is physically stored in the PersistentVolume.

The deployment is created in our cluster by running:

$ kubectl apply -f postgres/deployment.yaml

The result can be ascertained by viewing the minikube dashboard, but can take a few minutes to complete successfully.

1.4 Postgres Service

The postgres service is similar to the Django service we described in the previous tutorial, and the configuration file is as follows.

Kubernetes allows the use of the service name, i.e. postgres-service, for domain name resolution. This means that instead of an IP address we will use postgres-service as the host in order to reach our deployed database instance from Django. The service then routes the traffic to the Postgres pod.
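As an illustration of how such a name is put together, the following sketch builds the fully qualified DNS name for a service and rejects names that are not valid DNS labels, such as ones containing an underscore. It assumes the default namespace (as shown in the claim column of the kubectl get pv output) and the common default cluster domain cluster.local, which may differ on your cluster. The function names are hypothetical.

```python
import re

# RFC 1035 label: starts with a lowercase letter, continues with lowercase
# letters, digits or hyphens, and ends with a letter or digit.
_DNS_LABEL = re.compile(r"[a-z]([-a-z0-9]*[a-z0-9])?")


def is_valid_service_name(name: str) -> bool:
    """Check the name against the DNS label rules, including the 63-char limit."""
    return len(name) <= 63 and _DNS_LABEL.fullmatch(name) is not None


def service_fqdn(service: str, namespace: str = "default",
                 cluster_domain: str = "cluster.local") -> str:
    """Build <service>.<namespace>.svc.<cluster_domain> for a service."""
    if not is_valid_service_name(service):
        raise ValueError(f"not a valid service name: {service!r}")
    return f"{service}.{namespace}.svc.{cluster_domain}"


if __name__ == "__main__":
    print(service_fqdn("postgres-service"))
    print(is_valid_service_name("postgres_service"))
```

Pods in the same namespace can use the short name on its own, so the Django settings only need postgres-service as the host.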

The beauty of using Kubernetes is that, with a slight modification to the above Service description, we can use a database external to the cluster, and as long as the service name remains constant, Kubernetes will be able to find the external database. We will investigate this in detail when we focus on deploying the Kubernetes cluster to cloud native solutions (see part 5 of this series). To add your Postgres service to the Kubernetes cluster, run:

$ kubectl apply -f postgres/service.yaml

The result can be ascertained by viewing the minikube dashboard.

2. Django

2.1 settings.py

By default, Django uses the sqlite database configuration. To update the database configuration, the following modifications need to be made to the DATABASES variable in the settings.py file.

import os

DATABASES = {

    'default': {

        'ENGINE': 'django.db.backends.postgresql',

        'NAME': 'kubernetes_django',

        'USER': os.getenv('POSTGRES_USER'),

        'PASSWORD': os.getenv('POSTGRES_PASSWORD'),

        'HOST': os.getenv('POSTGRES_HOST'),

        # Falls back to the default PostgreSQL port when POSTGRES_PORT is unset.
        'PORT': os.getenv('POSTGRES_PORT', 5432),

    }

}

The database credentials will be inferred from the environment variables as it’s not a good idea to store them directly in the settings file which is to be checked into version control.
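One drawback of reading the values with os.getenv is that a missing variable silently becomes None and only surfaces later as a connection error. A possible variation, sketched below, builds the same dictionary but fails early with a clear message. The database_settings helper is hypothetical and not part of the project. It uses RuntimeError to stay free of Django imports, and it takes the environment as a parameter so it can be tried without touching the real one.

```python
import os
from typing import Mapping


def database_settings(environ: Mapping[str, str] = os.environ) -> dict:
    """Build the DATABASES setting, failing early if a variable is missing."""
    required = ("POSTGRES_USER", "POSTGRES_PASSWORD", "POSTGRES_HOST")
    missing = [name for name in required if not environ.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {
        "default": {
            "ENGINE": "django.db.backends.postgresql",
            "NAME": "kubernetes_django",
            "USER": environ["POSTGRES_USER"],
            "PASSWORD": environ["POSTGRES_PASSWORD"],
            "HOST": environ["POSTGRES_HOST"],
            # Default PostgreSQL port when POSTGRES_PORT is not set.
            "PORT": environ.get("POSTGRES_PORT", "5432"),
        }
    }


if __name__ == "__main__":
    # Example values only; in the cluster these come from the Secret object.
    example_env = {
        "POSTGRES_USER": "example-user",
        "POSTGRES_PASSWORD": "example-password",
        "POSTGRES_HOST": "postgres-service",
    }
    print(database_settings(example_env)["default"]["HOST"])
```

In settings.py this would be used as DATABASES = database_settings().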

In addition, the django-health-check python module is used to determine whether a successful connection to the database has been made. In a more advanced configuration, the module provides convenience endpoints which can be utilized by Kubernetes to determine the health status of a pod.

Once these changes have been made, the Django image needs to be built again using:

$ docker build -t <IMAGE_NAME>:<TAG> .

Note that this should be run against the minikube docker daemon so that Kubernetes can discover the local docker image. The <TAG> parameter should be different from the previous build to allow the deployment to be updated in the cluster.

2.2 Updating the Django Deployment

Once the Django docker image has been built, we need to update our existing Django deployment in django/deployments.yaml to use the new Django image as well as provide the required environment variables.

The update to the deployment file includes:

- Updating the project image to the new version.

- Setting the database credentials as environment variables which will be passed into the settings file. The POSTGRES_USER and POSTGRES_PASSWORD variables are extracted from the Secret object that was created earlier. The POSTGRES_HOST variable takes on the value of the Postgres service that we created. As was explained, Kubernetes is clever enough to map the postgres service name to the actual pod IP.

- Adding a bash script, i.e. run.sh, which contains the commands to run the migrations (more on migrations later on) as well as to start a gunicorn server.

We now apply the updated deployment to the cluster using the kubectl apply -f <filename>.yaml . Once the deployment has been completed, we can check if the database has been configured. To do this we get the Django service name using.

$ kubectl get serviceAnd with the retrieved service name, we can use minikube to launch the service on the browser by running:

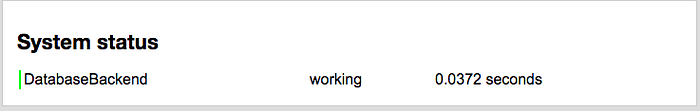

$ minikube service <service_name>This opens a browser with our running Django application. To see whether the database has been connected and initialized properly, we need to navigate to the health check endpoint, the path set for this project is / (to create your own, follow the django-health-check documentation). This should show the following status:

This is the expected result due to the fact the database has not been initialized properly. To initialize the database (assuming it has been set up properly), Django requires that Database migrations need to be run to set up the tables so that the health check can pass.

3. Database Migrations

Database migrations are utilized in many environments to update or revert a database schema. There are a few ways to run database migrations, but for this tutorial we will focus on three:

- Using a bash script as seen earlier (this is my usual go to).

- Using the Job API.

- Directly as a shell command via a pod.

All of them have their advantages and disadvantages which we will briefly touch on.

3.1 Using a bash script

This is the simplest way that I have found to run migrations. In my experience simply adding a bash script and initiating it as a container command (as seen earlier) prevents a lot of headaches and complexity and it is quite easy to automate. The following is an example with 2 simple commands, but can be more complex depending on the use case.

3.2 Job Resource

A Job creates a pod which runs a single task to completion. This task can be anything, but in our case, it is the Django migration. The job configuration file is:

The manifest to create a Job object is similar to that of a Deployment, with some slight modifications. For example, the migrations are run by setting the appropriate management command in the spec: template: spec: containers: command field.

The Job is scheduled on the cluster using:

$ kubectl apply -f django/job-migration.yaml

To see if the Job was completed, we need to get all the pods:

$ kubectl get pods --show-all

NAME                                  READY   STATUS      RESTARTS   AGE

django-migrations-sw5s4               0/1     Completed   0          1m

django-pod-fdbc9bc9b-mkqt4            1/1     Running     0          28m

postgres-deployment-946db9dfb-2xcxm   1/1     Running     0          28m

We can see that the django-migrations job has been completed. The full name of the migration pod is then used to fetch the logs, which show that the migration was completed successfully.

$ kubectl logs django-migrations-sw5s4

Operations to perform:

Apply all migrations: admin, auth, contenttypes, db, sessions

Running migrations:

Applying contenttypes.0001_initial... OK

To confirm that Django is connected to the database, navigate back to the root url on the browser, and if everything worked out well, you should see the following.

The downside of using the Job resource to run migrations is that the migrations cannot be run again without modifying the manifest file, i.e. by updating the image name. This is usually the case anyway when deploying new versions of the codebase online. However, in the scenario where the migrations need to be re-run with the same image, the Job object needs to be deleted from the server before it can be run again:

$ kubectl delete -f django/job-migration.yaml

3.3 With the CLI

Migrations can also be executed from the shell of a running container using the kubectl exec command. In order to run the migrations, we need to get the name of the running pod of interest by:

$ kubectl get pods

Once the pod name has been found, the migrations can be run by:

$ kubectl exec <pod_name> -- python /app/manage.py migrate

This should show the same results as running the migrations using the job.

4. Conclusion

Now that we have seen how to install and run Postgres on a local Kubernetes cluster, the next step will be to add Redis for caching and as a message broker, as well as Celery for asynchronous task processing.

If you have any questions or anything needs clarification, you can book a time with me on https://mbele.io/mark

5. Troubleshooting

During the course of this tutorial, I kept running into problems with minikube, including but not limited to:

- Minikube unable to open the dashboard or service in a new browser.

- Kubectl external service not exposed properly.

To fix these problems, on occasion I had to delete all my deployments and services, after which I restarted minikube and redeployed. In one particularly extreme case I had to restart my laptop and repeat the steps for deploying.

6. Credits

- https://myjavabytes.wordpress.com/2017/03/12/getting-a-host-path-persistent-volume-to-work-in-minikube-kubernetes-run-locally/

- https://blog.bigbinary.com/2017/06/16/managing-rails-tasks-such-as-db-migrate-and-db-seed-on-kuberenetes-while-performing-rolling-deployments.html

- https://medium.com/google-cloud/deploying-django-postgres-and-redis-containers-to-kubernetes-part-2-b287f7970a33